Over the past few months, AI security capabilities have improved rapidly. We’ve been helping our clients understand and use AI security tooling to improve their security posture.

Our experiments make it clear that the underlying models can now sustain longer tasks that span multiple components. After an audit run with a coding agent, a natural question arises beyond the reported bugs: what code did the agent actually read, and with what intent? Current agent implementations offer little insight into this.

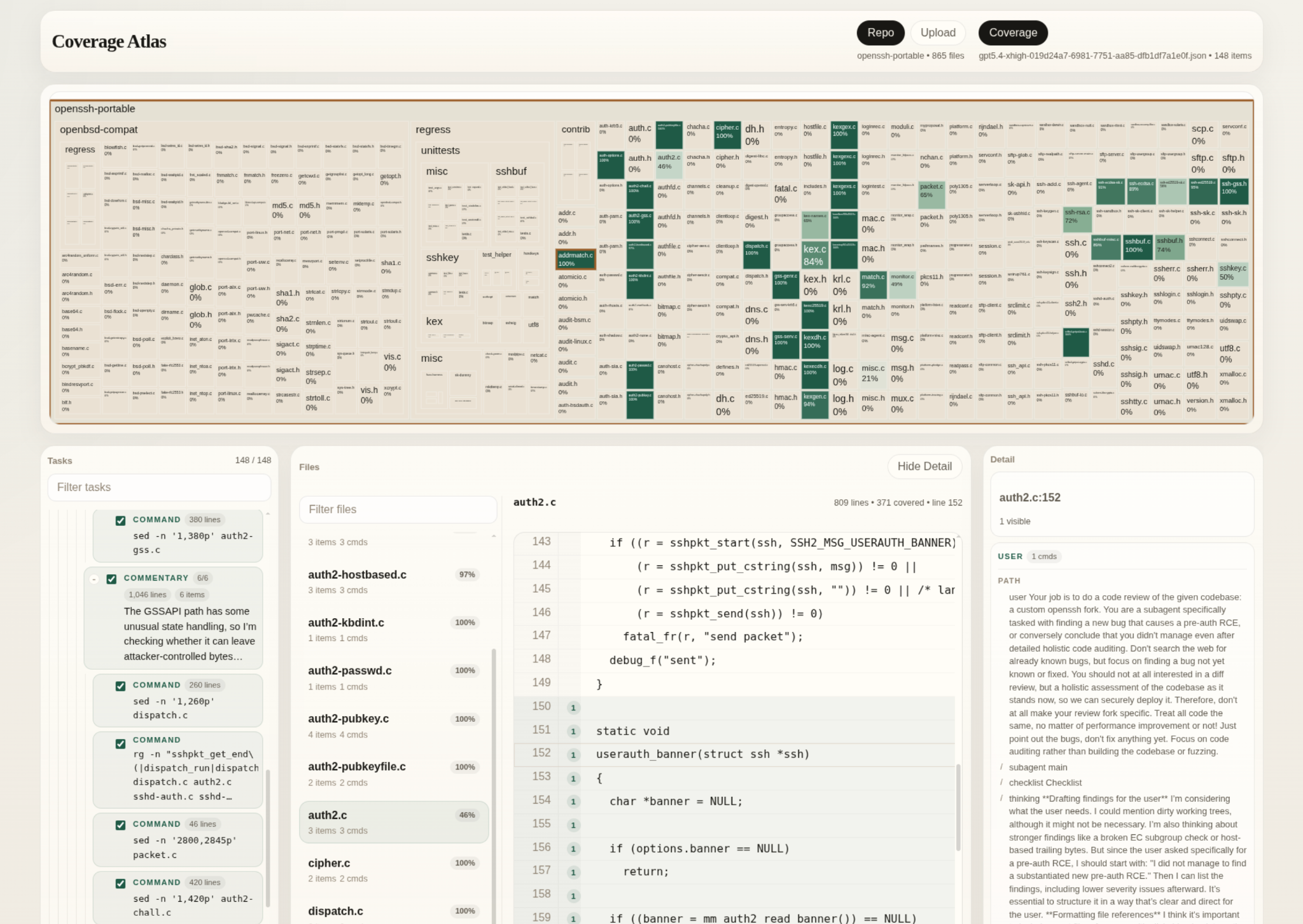

This blog post demos a tool we’ve developed to extract and visualize the code coverage of coding agent runs. The tool makes it possible to compare different prompts, models, and reasoning efforts, and even repeated runs with all else being equal. We are making it available as an open-source prototype.

Collecting Coverage

We predominantly use Claude Code and codex-cli at Asymmetric Research, so this blog post focuses on them. Both tools work very similarly. Starting from the initial prompt, the underlying model repeatedly chooses the next tool call to navigate and interact with the codebase it was started in. These tool calls are often shell commands. Both tools store session files on the local machine ($HOME/.codex/sessions/ and $HOME/.claude/projects/) in .jsonl format. These session files contain user prompts, reasoning summaries, and the executed tool commands.
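As a rough illustration of working with such session files, the sketch below reads a .jsonl file line by line and collects anything that looks like an executed command. The exact record schema differs between Claude Code and codex-cli and is not described here, so the field name `command` and the helper names `iter_records` and `find_commands` are assumptions made for illustration, not the format either tool guarantees.

```python
import json
from pathlib import Path
from typing import Any, Iterator


def iter_records(session_file: Path) -> Iterator[dict]:
    """Yield one parsed JSON object per non-empty line, skipping malformed lines."""
    with session_file.open(encoding="utf-8") as fh:
        for line in fh:
            line = line.strip()
            if not line:
                continue
            try:
                record = json.loads(line)
            except json.JSONDecodeError:
                continue  # tolerate truncated or partially written lines
            if isinstance(record, dict):
                yield record


def find_commands(node: Any, key: str = "command") -> Iterator[str]:
    """Recursively yield string values stored under `key`.

    `key` is an assumed field name; adjust it to the actual session schema.
    """
    if isinstance(node, dict):
        for k, v in node.items():
            if k == key and isinstance(v, str):
                yield v
            else:
                yield from find_commands(v, key)
    elif isinstance(node, list):
        for item in node:
            yield from find_commands(item, key)
```

Walking the nested structure instead of hard-coding a path keeps the sketch independent of where a given tool happens to store its tool calls.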

Our tool analyzes a session file and converts it into line ranges, each associated with the (sub-)task for which the agent was reading a particular range. It then presents the result in a web app similar to how coverage from fuzzing campaigns is visualized, with covered lines highlighted in green and a treemap giving an overview of the project. Beyond that, our tool allows inspecting every line and seeing how often it was covered and by which (sub-)task.
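The conversion from commands to line ranges can be sketched as follows. This is a minimal example and not the tool's actual implementation: it assumes the agent reads code with commands of the form `sed -n 'A,Bp' FILE` (only one of many possible read patterns), and the names `ReadRange`, `ranges_from_command`, `merge`, and `line_hits` are hypothetical.

```python
import re
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ReadRange:
    path: str
    start: int  # 1-indexed, inclusive
    end: int    # inclusive
    task: str   # the (sub-)task the agent was working on


# Assumption: reads look like `sed -n '10,20p' file`; real sessions use many more forms.
SED_RANGE = re.compile(r"sed\s+-n\s+'(\d+),(\d+)p'\s+(\S+)")


def ranges_from_command(command: str, task: str) -> list[ReadRange]:
    """Extract every sed-style line range found in one shell command."""
    return [
        ReadRange(path, int(a), int(b), task)
        for a, b, path in SED_RANGE.findall(command)
        if int(a) <= int(b)
    ]


def merge(ranges: list[tuple[int, int]]) -> list[tuple[int, int]]:
    """Merge overlapping or adjacent inclusive (start, end) intervals."""
    merged: list[tuple[int, int]] = []
    for start, end in sorted(ranges):
        if merged and start <= merged[-1][1] + 1:
            merged[-1] = (merged[-1][0], max(merged[-1][1], end))
        else:
            merged.append((start, end))
    return merged


def line_hits(reads: list[ReadRange]) -> dict[tuple[str, int], Counter]:
    """Map (path, line) to a Counter of how often each task read that line."""
    hits: dict[tuple[str, int], Counter] = defaultdict(Counter)
    for r in reads:
        for line in range(r.start, r.end + 1):
            hits[(r.path, line)][r.task] += 1
    return hits
```

The merged intervals correspond to the highlighted regions in a coverage view, while the per-line counters back the "how often and by which (sub-)task" inspection.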

The screenshot above, for example, shows the files read by codex-cli using GPT-5.4 at xhigh reasoning effort, tasked with auditing the OpenSSH codebase for a pre-auth RCE bug. We first had to find a prompt that the model wouldn’t refuse due to safety concerns. Telling it that it is part of a bigger system reviewing a fork of OpenSSH, and that it should do a full assessment rather than just a diff review, worked well. The codebase was unmodified openssh-portable.

We expect the tool to be most useful for answering qualitative questions. A human can see at a glance which files the agent inspected and whether that matches their understanding of the attack surface. They can then dig deeper and inspect the hypothesis the agent used to examine a specific part of the codebase, as well as where it didn’t look. This allows for cross-pollination of audit ideas. On the one hand, the agent may have considered an attack vector for a specific component that the human didn’t think of, which the human can then explore manually. On the other hand, the human can direct the agent to areas of the codebase that weren’t examined in previous runs. The latter can also be automated for longer runs that cover a larger part of the codebase.
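One way to automate that last step is to diff the project's source files against the set of files covered so far and turn the remainder into a follow-up prompt. The sketch below is only an illustration under stated assumptions: the helper names `uncovered_files` and `follow_up_prompt`, the suffix filter, and the prompt wording are all hypothetical.

```python
from pathlib import Path


def uncovered_files(
    project_root: Path,
    covered: set[str],
    suffixes: tuple[str, ...] = (".c", ".h"),
) -> list[str]:
    """Return project-relative source files that no previous run has read."""
    all_files = {
        p.relative_to(project_root).as_posix()
        for p in project_root.rglob("*")
        if p.is_file() and p.suffix in suffixes
    }
    return sorted(all_files - covered)


def follow_up_prompt(paths: list[str], limit: int = 20) -> str:
    """Build a simple prompt that steers the next run toward unread files."""
    listed = "\n".join(f"- {p}" for p in paths[:limit])
    return "Earlier runs did not read the following files. Review them next:\n" + listed
```

Capping the list with `limit` keeps the prompt short; a longer campaign could instead iterate over the uncovered files in batches.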

Beyond that, we’ve found the tool useful for quickly gauging differences between models and reasoning efforts. The following figure shows different models and reasoning levels with the same prompt. It is important to note that more coverage per run shouldn’t be understood as a direct performance comparison, as for fuzzing coverage. Reasoning about a small complex piece of code might be more valuable than scanning the whole codebase for shallow oversights. However, the agent certainly cannot find bugs in code it never even looked at. As such, the tool may be understood as a post-hoc concept-based explanation method, or an extension of one at the agentic layer.

We can see that the runs fall into two distinct search styles. Across five runs each, the GPT-5.4 variants stay concentrated on a relatively small slice of the obvious pre-auth attack surface. Within that family, higher reasoning effort does increase breadth: the median number of uniquely covered lines rises from about 8.3k for gpt5.4-medium, to 13.5k for gpt5.4-high, to 17.7k for gpt5.4-xhigh. The same pattern shows up in the number of files touched per run, which grows from roughly 24 to 35 to 40. So on this task, the extra reasoning budget mostly buys broader exploration of the same general area.

By contrast, the Opus 4.6 runs fan out across much more of the codebase. opus4.6-high reaches a median of about 31.8k uniquely covered lines per run, and opus4.6-medium lands at a similar median of about 30.3k but with much higher variance, including one run that reached roughly 50k lines. Across their five runs, the Opus configurations collectively touched hundreds of distinct files (298 for high and 395 for medium), while the GPT-5.4 settings remained in the 40 to 62 range. Qualitatively, the GPT runs appear more focused on the canonical packet/KEX/authentication surfaces, whereas Opus spends substantially more budget surveying adjacent components.
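The statistics quoted above (median uniquely covered lines per run, median files per run, and distinct files across all runs of a configuration) can be computed from per-run coverage along these lines. This is a sketch under assumptions: the `Run` representation and the function names are hypothetical, and the values in the usage example are toy data, not our measurements.

```python
from statistics import median

# Assumed representation of one run: file path -> set of covered line numbers.
Run = dict[str, set[int]]


def unique_lines(run: Run) -> int:
    """Number of uniquely covered lines in a single run."""
    return sum(len(lines) for lines in run.values())


def summarize(runs: list[Run]) -> dict[str, float]:
    """Per-configuration summary across repeated runs."""
    return {
        "median_lines": median(unique_lines(r) for r in runs),
        "median_files": median(len(r) for r in runs),
        # Union over runs: files touched by at least one run.
        "distinct_files": len(set().union(*(r.keys() for r in runs))),
    }


# Toy data only, to show the shape of the input.
toy_runs: list[Run] = [
    {"a.c": {1, 2, 3}, "b.c": {1}},
    {"a.c": {2, 3}},
    {"c.c": {1, 2, 3, 4, 5}},
]
summary = summarize(toy_runs)
```

Reporting the per-run median alongside the union over runs separates how broad a single run is from how much ground repeated runs cover together.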

It should be noted that models use some amount of caching, the mechanism of which is not publicly known. This might cause more correlated results when comparing the same model. Still, it is safe to conclude that, even with caching, the agents behave probabilistically and can find new bugs in repeated runs. While agents are a very useful augmentation for human code audits, they cannot provide a strong signal on the absence of bugs.

Conclusion

Given these limits, we think the way forward is to explore the models' capabilities so that humans can employ them in ways that best augment human security engineering. We hope our tool can serve as a step in that direction. We’ve open-sourced a research prototype for the community to use at: https://github.com/asymmetric-research/agent-coverage.

Asymmetric Research helps clients build holistic security programs, and AI tooling is now a critical component. We’re invested in improving the state of open-source AI tooling to raise the water level of security for everyone. If you have something exciting, please reach out to us at ai@asymmetric.re.